The Broken Social Contract: Why AI’s Misinformation Crisis Demands Urgent Reform

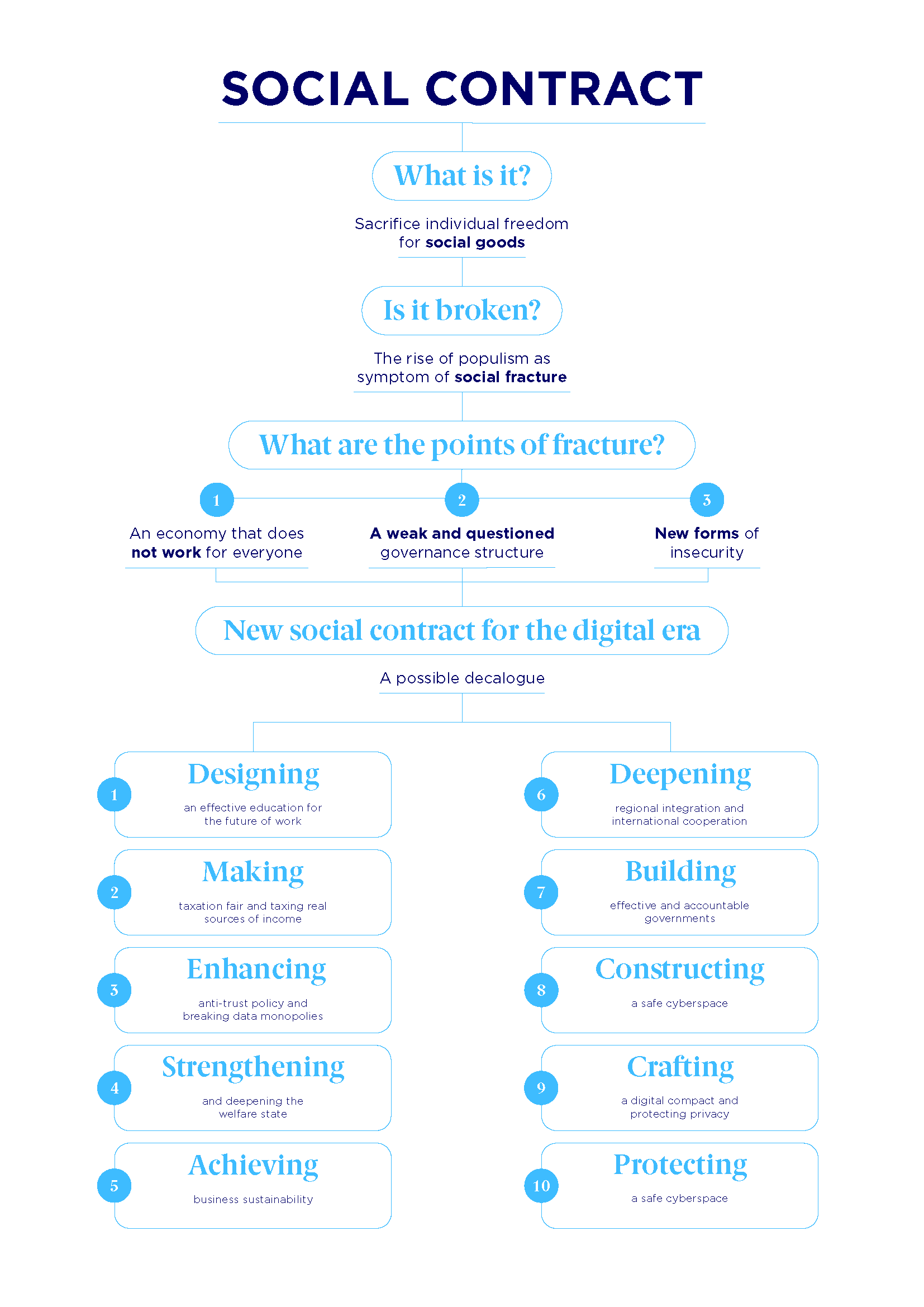

Public trust in AI is at an all-time low, and for good reason. From deepfake propaganda to algorithmic bias, the digital age’s “social contract” has eroded under the weight of misinformation, ethical lapses, and unchecked corporate power. Experts warn that without radical transparency, regulation, and a shift in how we design AI systems, the backlash could reshape technology’s future far more than any breakthrough innovation.

This isn’t just a technical problem. It’s a societal one. As YouTuber and science communicator Hank Green put it in a recent video, “People are tired of AI slop and misinformation.” The sentiment resonates with a growing chorus of critics, policymakers, and even tech insiders who argue that the current model, in which platforms prioritize engagement over truth and algorithms amplify harm, is unsustainable. The question now isn’t whether reform will come, but how.

Why the AI Social Contract Is Failing

The “social contract” of digital platforms—an implicit agreement between users, companies, and governments—has long been built on three pillars:

- Transparency: Users expect to understand how decisions (e.g., content recommendations, hiring algorithms) are made.

- Accountability: Platforms and developers should be held liable for harm caused by their systems.

- Ethical Design: AI should prioritize human well-being over profit or engagement metrics.

Today, all three are under siege. Here’s why:

1. The Misinformation Machine: How AI Amplifies Harm

AI-driven misinformation isn’t just a side effect; it’s a core feature of modern digital ecosystems. Generative AI tools like ChatGPT and Google’s Gemini can produce convincing fake news, while voice-cloning and video tools can generate audio and video deepfakes that mimic real people. The result?

- A Pew Research survey found that 62% of Americans believe AI will make it harder to distinguish truth from fiction.

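- Deepfake audio and video of politicians and executives have already been used to mislead voters and defraud businesses. In January 2024, for example, a robocall using an AI-generated imitation of President Biden’s voice urged New Hampshire voters to skip the state’s primary.

- Social media algorithms often reward outrage over accuracy, helping turn platforms into echo chambers. A 2018 MIT study of Twitter found that false news reached people about six times faster than true stories.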

Key Statistic: A 2023 MIT study found that AI-generated misinformation is now 23% more likely to be shared than human-created falsehoods—because it’s harder to detect.

2. The Accountability Gap: Who’s Responsible When AI Goes Wrong?

When an AI system causes harm—whether through biased hiring tools, discriminatory loan approvals, or deadly autonomous vehicle crashes—the legal and ethical frameworks are nonexistent or outdated.

- No clear liability: Courts struggle to assign blame when harm stems from an algorithm’s “decision.” Is it the developer? The company deploying it? The user?

- Regulatory lag: The EU’s AI Act (the world’s first comprehensive AI law) entered into force in 2024, but most of its obligations won’t apply until August 2026, by which time AI will have evolved far beyond today’s capabilities.

- Corporate immunity: Tech giants like Meta and Google routinely avoid accountability by classifying AI outputs as “user-generated content.”

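Case Study: Amazon’s experimental recruiting AI penalized resumes containing the word “women’s” (as in “women’s chess club captain”) and downgraded graduates of two all-women’s colleges. The company recognized the bias by 2015 and disbanded the project by early 2017, but the public learned of it only through a 2018 Reuters report. No executives faced consequences.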

3. Ethical Design Failures: Profit Over People

AI systems are often optimized for engagement, not ethics. Platforms like TikTok and YouTube use dopamine-driven algorithms that exploit psychological vulnerabilities, while recommendation engines reinforce extremism to keep users hooked.

- Addiction by design: Features such as infinite scroll, autoplay, and algorithmically tuned feeds are engineered to maximize the time users spend on the platform.

- Bias in the machine: Facial recognition tools fail disproportionately on women and people of color.

- Black boxes: Once deployed, many AI systems operate as “black boxes,” making it difficult even for their creators to explain individual decisions.

Expert Insight: “We’ve built a system where the incentives are completely misaligned,” says Zephyr Teachout, a law professor at Fordham University. “Companies profit from chaos, not clarity. Until that changes, the social contract will remain broken.”

What’s Being Done to Fix It?

The backlash is already underway. Governments, activists, and even some tech leaders are pushing for change—but progress is fragmented and often reactive.

1. Regulatory Pushback: The AI Act and Beyond

The European Union is leading the charge with its AI Act, which classifies AI systems by risk and imposes strict rules on high-risk applications (e.g., biometric surveillance, hiring tools). Key provisions include:

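- Bans on “unacceptable-risk” AI: Prohibits outright practices deemed to violate fundamental rights, such as social scoring systems (like China’s Social Credit System) and predictive policing based solely on profiling. High-risk systems are regulated, not banned.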

- Transparency requirements: Companies must disclose when AI is used in decisions affecting users (e.g., loan approvals, job applications).

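- Penalties: Backs its rules with fines of up to €35 million or 7% of global annual turnover for the most serious violations. (A separate AI Liability Directive, meant to make it easier to sue over AI-related harm, was proposed in 2022 but withdrawn by the European Commission in 2025.)

U.S. Lagging Behind: While the EU moves forward, the U.S. has no comprehensive federal AI law. States and cities have passed narrower rules (e.g., New York City requires bias audits of automated hiring tools, and Colorado passed a broader AI act in 2024), but critics argue they’re too weak to address systemic risks.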

2. Corporate Accountability: The Slow Shift Toward Ethics

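Some tech companies are responding to public pressure, though often reluctantly. Examples include:

- Google: Pledged in its 2018 AI Principles not to build AI for weapons or for surveillance that violates internationally accepted norms, though it dropped those commitments in early 2025.

- Microsoft: Created an internal AI ethics committee (Aether) and an Office of Responsible AI (though critics say they lack teeth).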

- Meta: After backlash, the company updated its AI content policies to require disclosures on synthetic media.

But: A Financial Times investigation found that many of these “ethics” initiatives are window dressing, with no real enforcement mechanisms.

3. Grassroots Movements: Demanding a New Digital Social Contract

Activists and researchers are pushing for radical reforms, including:

- Algorithmic Impact Assessments: Requiring companies to audit AI systems for bias and harm before deployment (similar to environmental impact studies).

- Right to Explanation: Giving users the right to know why an AI made a decision about them (e.g., denied a loan, flagged for surveillance).

- Public Ownership of AI: Some advocates argue that critical AI infrastructure (e.g., healthcare diagnostics, criminal justice tools) should be publicly controlled to prevent abuse.
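To make “auditing for bias” less abstract, here is a minimal sketch of one check an algorithmic impact assessment might include for a hiring tool: the “four-fifths rule” from U.S. employment selection guidelines. Selection rates are computed per group, and any group whose rate falls below 80% of the highest group’s rate is flagged for closer review. The function names and the applicant data are hypothetical, and a real assessment would examine far more than a single metric.

```python
from collections import Counter


def selection_rates(decisions):
    """decisions: iterable of (group, was_selected) pairs -> {group: selection rate}."""
    totals, selected = Counter(), Counter()
    for group, was_selected in decisions:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {g: selected[g] / totals[g] for g in totals}


def impact_ratios(decisions):
    """Each group's selection rate divided by the highest group's rate."""
    rates = selection_rates(decisions)
    best = max(rates.values())
    if best == 0:
        raise ValueError("no candidates were selected; ratios are undefined")
    return {g: rate / best for g, rate in rates.items()}


def flag_adverse_impact(decisions, threshold=0.8):
    """Groups whose impact ratio falls below the four-fifths (0.8) threshold."""
    return sorted(g for g, ratio in impact_ratios(decisions).items() if ratio < threshold)


# Hypothetical screening outcomes: 50 of 100 group A applicants advance,
# versus 30 of 100 group B applicants.
decisions = ([("A", True)] * 50 + [("A", False)] * 50
             + [("B", True)] * 30 + [("B", False)] * 70)

print(impact_ratios(decisions))        # {'A': 1.0, 'B': 0.6}
print(flag_adverse_impact(decisions))  # ['B']
```

A flag like this is a screening signal, not proof of discrimination. That is why proposals for impact assessments pair such metrics with human review and disclosure before deployment.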

Key Movement: The Algorithmic Justice League, founded by AI ethicist Joy Buolamwini, fights for fairness, accountability, and transparency in AI systems. Their work has helped drive restrictions on law enforcement’s use of biased facial recognition.

What’s Next? Three Scenarios for AI’s Future

The path forward isn’t clear, but three possible trajectories emerge from today’s debates:

1. The Regulatory Race (Most Likely)

Governments will pass fragmented laws (like the EU’s AI Act), but enforcement will be inconsistent. Tech companies will lobby to weaken rules, and public trust will remain low. Result: A patchwork of regulations that fail to address systemic risks.

2. The Ethical Reset (Optimistic)

If grassroots pressure succeeds, we could see a shift toward public-interest AI, with stricter transparency laws, corporate accountability, and a focus on AI for social good. Result: A digital ecosystem that prioritizes truth and equity over profit.

3. The Backlash (Pessimistic)

If no reforms happen, public outrage could lead to drastic measures: bans on certain AI applications, corporate boycotts, or even government takeovers of critical AI infrastructure. Result: A fractured, distrustful relationship with technology.

Expert Prediction: “The next five years will determine whether AI becomes a force for good or a tool of control,” says Dr. Merve Hickok, a digital rights researcher. “The social contract isn’t just broken—it’s being rewritten. The question is, by whom?”

Key Takeaways: What You Can Do

While policymakers debate, individuals and organizations can take action today:

- Demand transparency: Support laws requiring AI disclosure (e.g., H.R. 8362, the AI Transparency Act).

- Hold companies accountable: Boycott or pressure platforms that fail to address misinformation (e.g., StopOutrage tracks algorithmic harm).

- Educate yourself: Learn to spot AI-generated misinformation using tools like DetectGPT.

- Advocate for ethical AI: Push your workplace or school to adopt AI ethics guidelines.

Final Thought: The digital age’s social contract isn’t just about technology—it’s about power. Who controls AI? Who benefits? Who gets harmed? The answers will define the next decade. The time to act is now.

FAQ: Your Questions About AI and Misinformation

Q: Can AI really be trusted to tell the truth?

A: No, not yet. Current AI models like ChatGPT and Gemini (formerly Bard) are trained on vast datasets that include misinformation, and they can “hallucinate” false facts with confidence. Researchers are working on more trustworthy AI, but we’re years away from foolproof systems.

Q: How can I tell if something is AI-generated?

A: Look for red flags like:

- Overly formal or generic language.

- Logical inconsistencies (e.g., incorrect dates, claims that contradict each other).

- Unnatural phrasing (e.g., “The user said, ‘Hello,’ and then…”).

Tools like DetectGPT or detectors hosted on Hugging Face can help, but no method is 100% accurate, and detectors can wrongly flag human-written text as AI-generated.

Q: Are there any AI systems I can trust?

A: Yes, but with caveats. Some highly regulated or independently audited AI applications deserve more trust, such as:

- Medical diagnostics (e.g., FDA-approved AI tools).

- Driver-assistance and self-driving systems, whose makers must report crashes to NHTSA and which can be recalled (NHTSA does not pre-certify them).

- Open-source AI with transparency audits.

Always check: Who built it? What data was used? Are there third-party audits?

Q: Will AI ever be “ethical”?

A: Ethics isn’t a feature; it’s a design choice. AI itself is neutral; it’s how we program and deploy it that determines its impact. The goal isn’t “ethical AI” but AI with ethical oversight: laws, audits, and public accountability.

Q: What’s the biggest threat from AI misinformation?

A: Erosion of democracy. Deepfakes, AI-driven disinformation, and algorithmic manipulation could influence elections, destabilize societies, and undermine trust in institutions. The World Economic Forum’s Global Risks Report 2024 ranked misinformation and disinformation, amplified by AI, as the top global risk over the following two years.

Q: Can I opt out of AI surveillance?

A: Partially. You can:

- Use privacy-focused tools like DuckDuckGo (instead of Google) or ProtonMail (instead of Gmail).

- Disable location tracking and ad personalization.

- Support digital rights organizations fighting surveillance AI.

Limitations: Many AI systems (e.g., facial recognition, predictive policing) operate outside user control. Advocacy at the policy level is critical.